The Farm Labor Apps You've Been Looking For

Our industry-leading farm labor management apps deliver real-time labor and production data directly from the field to the office.

- Easily collect accurate labor and production data from your fields in real time!

- Pay your employees quickly and correctly with minimal effort!

- Month-to-month pricing. No contracts required!

- Quick and easy setup. Get started with your labor management solution today!

"FieldClock paid for itself during the first harvest season we used it."

-Dave Martin, Dave Martin Farms

We know our platform is a great value, which is why we can publish our prices with confidence.

Manage your farm like a boss using our suite of labor management features

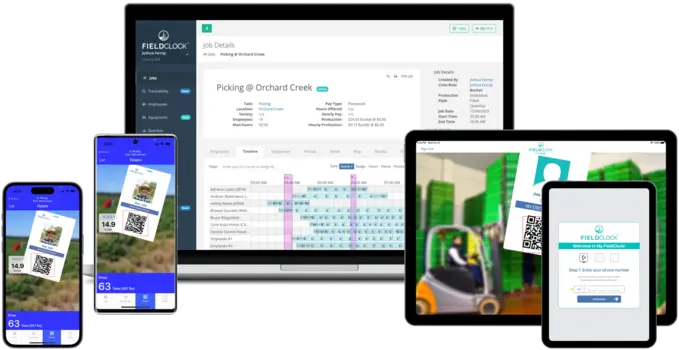

FieldClock & Kiosk

Our apps make farm labor management and data collection simple and effective. FieldClock is designed for the rigors of outdoor work and for users of all technical skill levels.

It's as easy as pointing a camera!

Easily export payroll to commonly used payroll systems

FieldClock is integrated with over two dozen of the most popular payroll platforms on the market, allowing farmers to export payroll in seconds with the click of a button.

Block-level accounting is a breeze with our wide variety of reporting options

Why farmers choose FieldClock for labor management and data collection

No Maintenance

Never worry about managing backups or whether your server is up to date. Even when you aren't connected to Wi-Fi, all data is saved to the device itself. Once a connection is available, the data syncs with our servers and is accessible on your Admin site almost instantly.

Modern

No clunky proprietary hardware required. FieldClock is available directly in the App Store and Google Play. The latest and greatest version is always available and there is no upcharge.

Flexible

Every farm's labor and data management needs are unique. FieldClock can be configured to show the options that your employees need without the clunkiness of other apps.

Freedom

We hate the thought of annoying sales reps trying to get you to prepay for years of service. FieldClock offers month-to-month pricing plans so you can use our labor management solution without feeling like you're trapped in a long-term contract.

What farmers are saying about FieldClock

"It was very obvious that the app was created by orchardists for orchardists."

"FieldClock has cut our time in half, if not more, on payroll entries."

Meet some of the farmers saving time and money with the help of FieldClock

Case study from a FieldClock client

SITUATION

Dave Martin Farms was a small farm using paper and punchcards to track time and piece-rate work.

SOLUTION

Switching to FieldClock removed the need to round up time and piece counts and also saved back-office time that was spent counting punchcards and entering time.

IMPACT

Dave put it best: "FieldClock paid for itself during the first harvest...my wife and I now save about 100 hours each during harvest."

Learn how much you're likely to overpay with the old ways

Many farmers think that pen and paper (and punchcards) are the cheapest way to manage their labor. In reality, overpayment and liability are much more expensive than our labor tracking services.

Answers to some frequently asked questions

Does the app work without internet?

Yes! The FieldClock app is designed to work in "offline mode" for those remote fields with no cellular coverage. Data is uploaded when the phones regain a cellular signal or Wi-Fi connection.

Do I need special hardware?

Nope! FieldClock is built for commodity iOS and Android devices that you can buy from your local store or online.

Does the app work in Spanish?

Yes! All of our apps work in English and Spanish, and more languages can be easily added if needed. We also take great care to make sure the mobile apps are usable by anybody, with no tech experience required!

Do I have to pay for upgrades?

Never! FieldClock is unique in offering our apps directly in the App Store and Google Play. You will always have access to the latest and greatest version.

Need more clarification?

Our sales team is available to answer your questions and demo how FieldClock can help your farm.

Stay posted on what FieldClock is up to

Be the first to know about new features and other ways FieldClock is leading farm labor tech forward.